- The Search Landscape Has Changed — Has Your Site?

- What Is Technical SEO? (Quick Answer)

- How Technical SEO Works: The Mechanism Layer

- The Data Behind Technical SEO's Impact

- Traditional vs. Modern Technical SEO Approach

- The CRAVE Framework™: A Proprietary Technical SEO Audit System

- Metrics That Matter: From Rankings to AI Visibility

- How to Conduct a Technical SEO Site Audit using CRAVE Framework

- Your Next Step: From Knowledge to Competitive Advantage

- Frequently Asked Questions


The Search Landscape Has Changed — Has Your Site?

Most websites are not losing rankings because of bad content. They are losing because Google cannot reliably crawl, render, or understand what they have built. Technical SEO is no longer a backend checklist — it is the structural foundation that determines whether your content ever reaches the audience it was written for.

In 2024, Google’s Search Quality Rater Guidelines were updated to place greater weight on page experience signals. Simultaneously, AI-powered search experiences — from Google’s AI Overviews to Perplexity and ChatGPT — now pull answers directly from indexed content. Sites that fail technical SEO audits are effectively invisible to both traditional and generative search engines.

The shift is not incremental. It is architectural. A single crawl error can cascade into deindexed pages, suppressed rankings, and zero AI citations. The practitioners who understand this are already pulling away from those who treat technical SEO as an afterthought.

This guide exists to close that gap.

What Is Technical SEO? (Quick Answer)

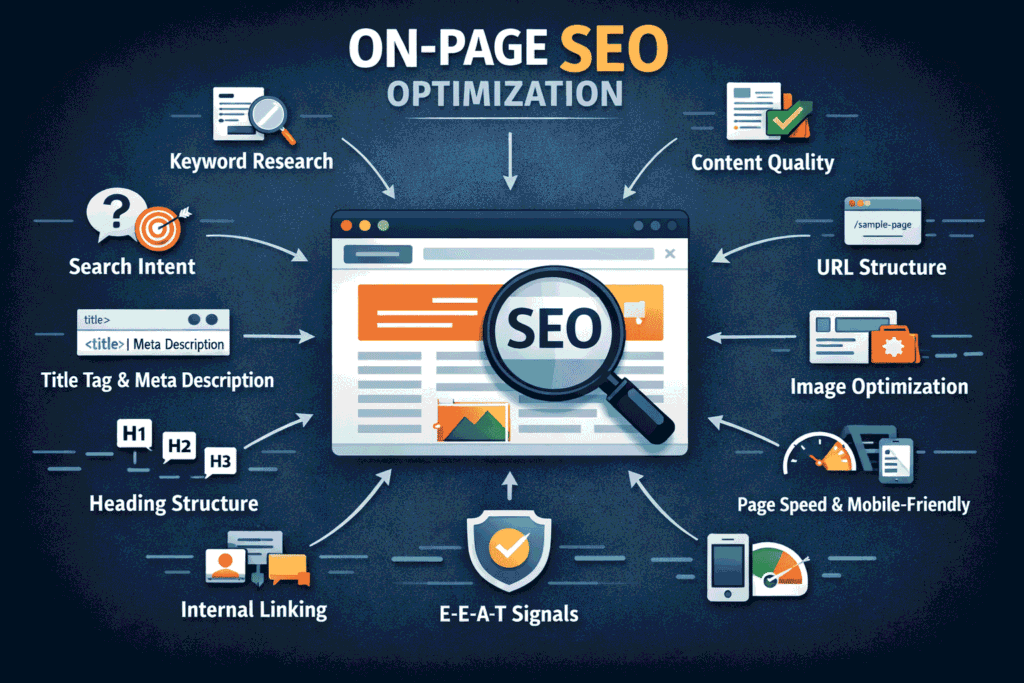

Technical SEO is the process of optimizing a website’s infrastructure — including crawlability, indexability, site speed, structured data, mobile-friendliness, and Core Web Vitals — to ensure search engines can efficiently discover, interpret, and rank its content. Unlike on-page SEO, which focuses on content quality, technical SEO addresses the underlying architecture that governs how search engines interact with a site. A technical SEO audit is a systematic evaluation of these infrastructure elements to identify issues that suppress rankings, block indexation, or degrade user experience. Conducted correctly, it forms the foundation of every high-performing SEO strategy in 2026 and beyond.

How Technical SEO Works: The Mechanism Layer

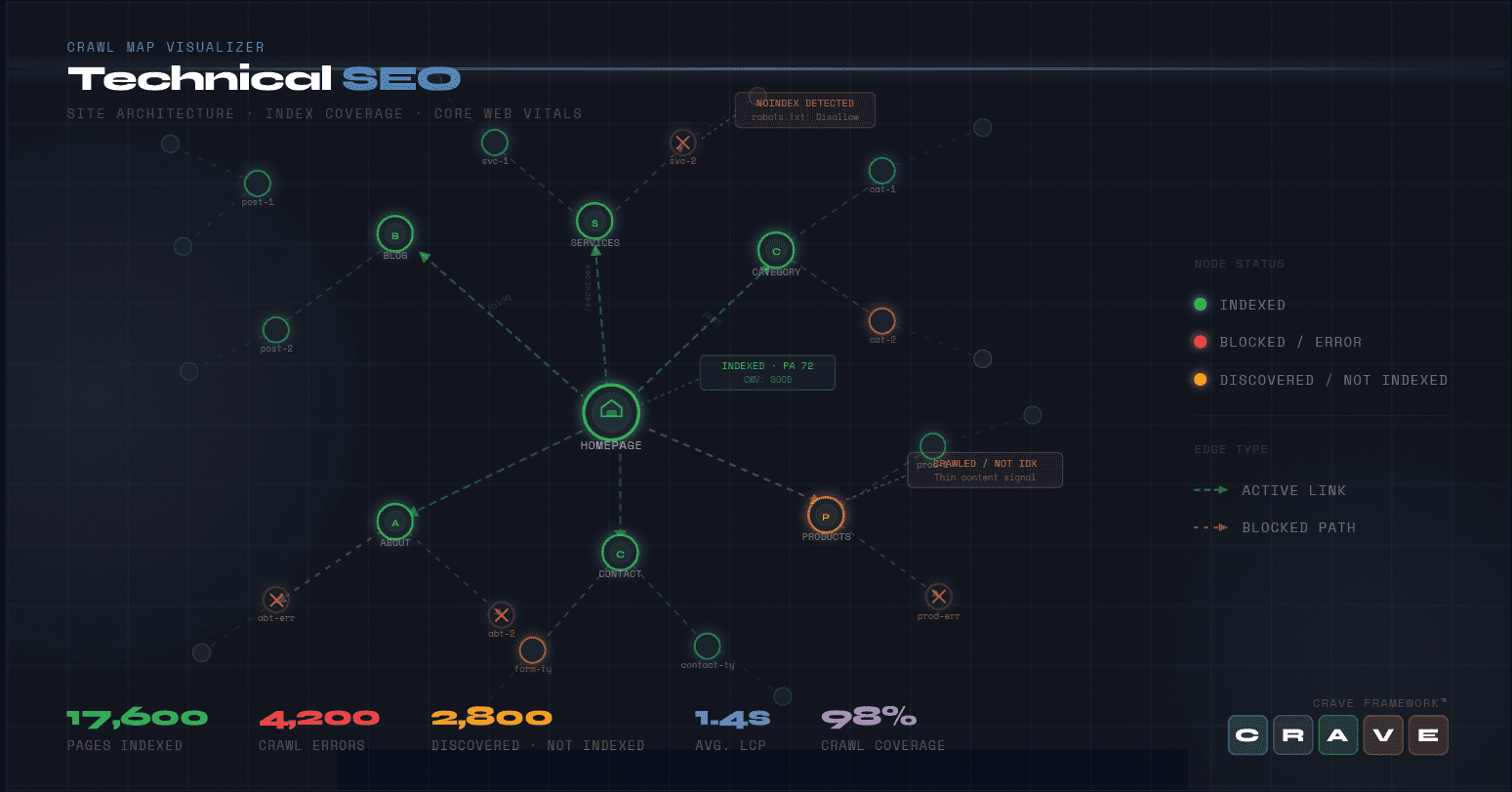

Crawlability: Can Search Engines Find Your Pages?

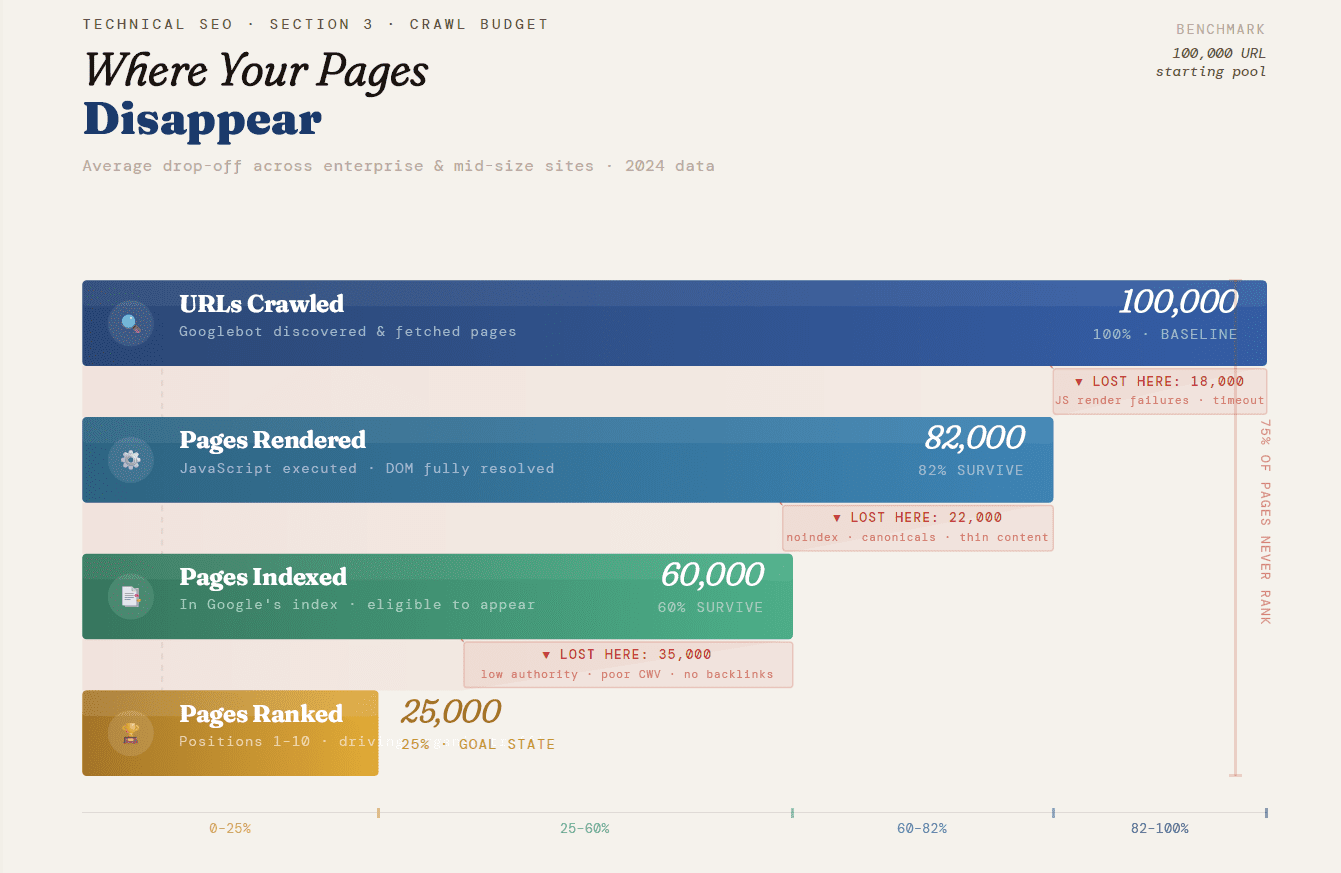

Googlebot and other crawlers navigate websites by following links. If your site architecture creates orphan pages or infinite crawl loops, or hides key content behind client-side JavaScript rendering, those pages may never be indexed, regardless of how strong the content is. Crawl budget management is especially critical for large e-commerce or enterprise sites, where Googlebot’s crawl allocation can be exhausted before it reaches high-priority pages.
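Orphan pages and excessive click depth can be spotted with a breadth-first walk over the internal link graph. The sketch below is illustrative only: the `links` dictionary is a hypothetical stand-in for the export of a crawl tool, and the three-click limit is the rule of thumb used later in this guide rather than a threshold published by Google.

```python
from collections import deque

def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage; returns clicks needed per page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:          # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal link graph: page -> pages it links to.
links = {
    "/": ["/shop", "/blog"],
    "/shop": ["/shop/widgets"],
    "/shop/widgets": ["/shop/widgets/blue"],
    "/shop/widgets/blue": ["/shop/widgets/blue/specs"],
    "/blog": [],
    "/old-landing-page": ["/shop"],   # nothing links to this page
}

depths = click_depths(links, "/")
orphans = sorted(set(links) - set(depths))                  # known but unreachable
too_deep = sorted(p for p, d in depths.items() if d > 3)    # more than 3 clicks

print("Orphans:", orphans)
print("Deeper than 3 clicks:", too_deep)
```

A real audit would build `links` from crawl data and compare it against the sitemap and server logs, since a page that nothing links to will not appear in a link-following crawl at all.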

Indexability: Are Your Pages Eligible to Rank?

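Crawlability and indexability are distinct. A page can be crawled and still be kept out of the index by a noindex directive or by a canonical tag pointing elsewhere. A disallow directive in robots.txt works differently: it blocks crawling rather than indexing, so a disallowed URL can still be indexed without its content if other pages link to it, and Googlebot never sees a noindex tag on a page it is not allowed to fetch. A thorough technical SEO audit distinguishes between intentional and accidental exclusions; the latter are one of the most common causes of invisible traffic loss.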
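As a rough illustration, the on-page index signals can be read straight from a page's HTML. This is a minimal sketch using only Python's standard library; the function name `index_status` and the sample URLs are hypothetical, and it ignores the `X-Robots-Tag` HTTP header, which can also carry a noindex directive.

```python
from html.parser import HTMLParser

class HeadSignals(HTMLParser):
    """Collects the meta robots directive and canonical URL from a page."""
    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "meta" and (a.get("name") or "").lower() in ("robots", "googlebot"):
            if "noindex" in (a.get("content") or "").lower():
                self.noindex = True
        elif tag == "link" and (a.get("rel") or "").lower() == "canonical":
            self.canonical = a.get("href")

def index_status(url: str, html: str) -> str:
    signals = HeadSignals()
    signals.feed(html)
    if signals.noindex:
        return "excluded: noindex"
    if signals.canonical and signals.canonical != url:
        return f"canonicalised to {signals.canonical}"
    return "indexable"

# Hypothetical pages for illustration.
print(index_status("https://example.com/a",
                   '<head><meta name="robots" content="noindex, follow"></head>'))
print(index_status("https://example.com/a?ref=x",
                   '<head><link rel="canonical" href="https://example.com/a"></head>'))
```

Comparing this output against the list of pages you intend to rank is one way to separate deliberate exclusions from accidental ones.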


Core Web Vitals: The UX-Ranking Interface

Google’s Core Web Vitals are Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). They measure real-world user experience and feed into Google’s page experience ranking signals. Google classifies an LCP above 4.0 seconds as ‘Poor’, and pages in that range are at a disadvantage in competitive SERPs where other relevance signals are closely matched.

Structured Data: Speaking Search Engine Language

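Schema markup communicates context to search engines in machine-readable format. It makes pages eligible for rich results such as product ratings, review snippets, and breadcrumb trails, and increasingly drives AI citation eligibility. Note that Google retired How-To rich results in 2023 and now shows FAQ rich results only for a narrow set of authoritative government and health sites, while sitelinks are generated algorithmically rather than from markup. Sites without structured data are structurally disadvantaged in both traditional and generative search.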
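To make "machine-readable format" concrete, here is a minimal sketch that builds a JSON-LD `Article` block. The helper name `article_jsonld` and the sample values are hypothetical. The property names (`headline`, `author`, `datePublished`) come from schema.org, but which properties a given rich result needs should be checked against Google's documentation.

```python
import json

SCRIPT_OPEN = '<script type="application/ld+json">'
SCRIPT_CLOSE = "</script>"

def article_jsonld(headline: str, author: str, date_published: str) -> str:
    """Return a JSON-LD <script> tag describing an Article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,   # ISO 8601 date
    }
    return SCRIPT_OPEN + json.dumps(data) + SCRIPT_CLOSE

# Hypothetical values for illustration.
tag = article_jsonld("What Is Technical SEO?", "Jane Doe", "2026-01-15")
print(tag)
```

The resulting tag would normally be placed in the page's `<head>` and then checked with a validator before deployment.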

HTTPS & Security Signals

Google confirmed HTTPS as a ranking signal in 2014. In 2026, a non-HTTPS site triggers browser warnings, increases bounce rates, and signals untrustworthiness to both users and crawlers. Mixed content issues — where an HTTPS page loads HTTP resources — create similar problems without obvious visual indication.
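Because mixed content gives no obvious visual sign, a simple scan of the page source can help. The sketch below is a simplified illustration using the standard library: it flags subresources loaded over `http://` and deliberately ignores ordinary `<a href>` links, which are navigation rather than mixed content. The sample markup is hypothetical, and resources referenced from CSS or injected by JavaScript are not covered.

```python
from html.parser import HTMLParser

SRC_TAGS = {"img", "script", "iframe", "audio", "video", "source"}

class MixedContentFinder(HTMLParser):
    """Records subresources that an HTTPS page would load over plain HTTP."""
    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag in SRC_TAGS:
            url = a.get("src")
        elif tag == "link" and "stylesheet" in (a.get("rel") or "").lower():
            url = a.get("href")
        else:
            url = None   # e.g. <a href="http://...">: a link, not a subresource
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))

def find_mixed_content(html: str) -> list[tuple[str, str]]:
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure

# Hypothetical page source.
PAGE = """
<link rel="stylesheet" href="https://example.com/site.css">
<img src="http://example.com/hero.jpg">
<script src="http://cdn.example.com/widget.js"></script>
<a href="http://example.org/">external link</a>
"""
print(find_mixed_content(PAGE))
```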

Mobile-First Indexing

Google uses the mobile version of a page as its primary source for indexing and ranking. If mobile and desktop content diverge, particularly in headings, structured data, or internal linking, the indexed version may be thinner than intended. This gap is one of the most frequently missed findings in a technical SEO site audit.

The Data Behind Technical SEO’s Impact

These are not theoretical concerns. The research is consistent:

- 53% of mobile visits are abandoned if a page takes longer than 3 seconds to load (Google, Think with Google research). Page speed is not just a ranking signal — it is a revenue signal.

- Semrush’s State of Content Marketing Report found that pages ranking in position 1 on Google load, on average, 1.65 seconds faster than pages ranking in position 10.

- Ahrefs reports that 66.5% of pages have no backlinks. Of those, a substantial portion are suppressed not by link deficiency, but by technical barriers preventing proper indexation.

- Google’s own documentation confirms that crawl budget is limited and sites with high volumes of low-quality or duplicate URLs will see their high-value pages crawled less frequently.

“A 1-second delay in page response can result in a 7% reduction in conversions.” This figure is widely attributed to a 2008 Aberdeen Group study, and the point still stands: technical performance is directly tied to commercial outcomes.

Traditional vs. Modern Technical SEO Approach

The discipline has evolved significantly. Here is how the old methodology compares to what elite practitioners execute today:

| Factor | Traditional Approach (Pre-2022) | Modern Approach (2026 and Beyond) |
| --- | --- | --- |
| Audit Frequency | Annual or one-time | Continuous / automated monitoring |
| Core Web Vitals | Not measured | Primary performance KPI |
| Structured Data | Optional enhancement | Required for AI & rich result visibility |
| Mobile Optimization | Desktop-first design | Mobile-first indexing compliance |
| JavaScript SEO | Ignored or avoided | Critical: render audits via Chrome DevTools |
| Crawl Budget | Relevant only for large sites | Relevant for any site with 500+ pages |
| AI Visibility | Not applicable | Schema, entity clarity & citation readiness |
| Log File Analysis | Rarely performed | Standard in enterprise audits |
| Internal Linking | Manually reviewed | Crawl-depth analysis with tooling |
| Page Experience | Speed as a courtesy metric | INP, LCP, CLS as ranking factors |

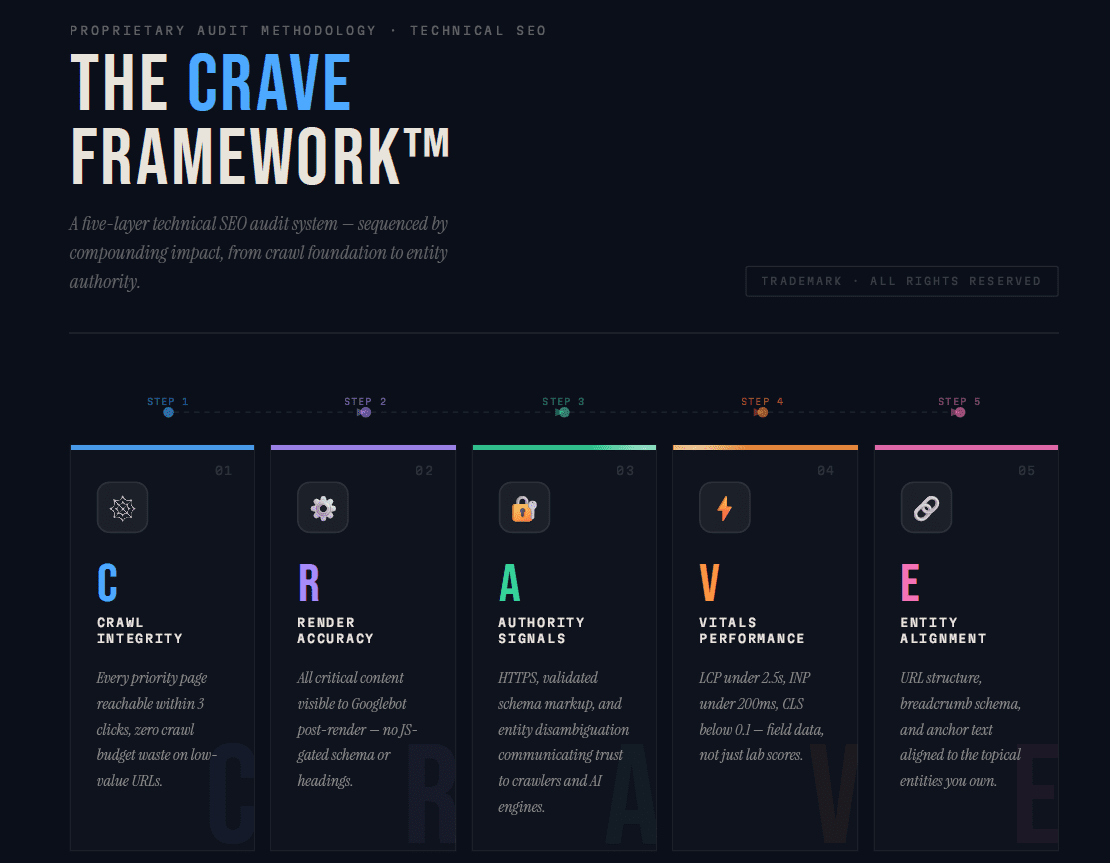

The CRAVE Framework™: A Proprietary Technical SEO Audit System

Most technical SEO checklists are flat lists without prioritization logic. The CRAVE Framework™ is a structured audit methodology designed to sequence technical fixes by their compounding impact — ensuring that foundational issues are resolved before optimization layers are applied.

C — Crawl Integrity

Audit robots.txt, XML sitemaps, crawl depth, and internal link architecture. Identify orphan pages, crawl traps, and disallow directives that may be blocking high-priority URLs. Goal: every important page must be crawlable within 3 clicks from the homepage.
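A first pass at the robots.txt part of this check can be scripted with Python's built-in `urllib.robotparser`. This is a sketch under stated assumptions: the rules and URLs are hypothetical, and the standard-library parser uses simple prefix matching without the `*` and `$` wildcard extensions that Google supports, so results for wildcard rules should be confirmed in Search Console.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given user agent is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(agent, u)]

# Hypothetical list of high-priority URLs to verify.
priority_urls = [
    "https://example.com/products/blue-widget",
    "https://example.com/cart/checkout",
    "https://example.com/search?q=widget",
]
print(blocked_urls(ROBOTS_TXT, priority_urls))
```

Any high-priority URL that shows up in the output is a candidate for an accidental disallow.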

R — Render Accuracy

Validate how Googlebot renders JavaScript-dependent content using Google Search Console’s URL Inspection tool, and compare the result with what you see in Chrome DevTools. Sites built on JavaScript frameworks such as React, Vue, or Next.js can end up loading content on the client side only, which crawlers may miss unless the pages use server-side rendering (SSR), static prerendering, or a dynamic rendering setup.

A — Authority Signals

Evaluate HTTPS implementation, structured data coverage, and entity disambiguation. This layer ensures the site communicates trustworthiness and topical authority to both traditional search and AI citation systems. Structured data should be validated with Google’s Rich Results Test and the Schema Markup Validator at validator.schema.org.

V — Vitals Performance

Measure and optimize Core Web Vitals — LCP, INP, and CLS — using Google PageSpeed Insights, Lighthouse, and CrUX (Chrome User Experience Report) data. Field data, not just lab data, drives ranking outcomes. Optimizations here include image format migration (WebP/AVIF), eliminating render-blocking resources, and implementing proper lazy loading.

E — Entity & Experience Alignment

Ensure the site’s topical architecture aligns with the entities and concepts it aims to rank for. This includes URL structure, breadcrumb schema, internal anchor text consistency, and hreflang configuration for multilingual sites. In 2026, entity alignment is the bridge between traditional ranking and AI citation eligibility.

Metrics That Matter: From Rankings to AI Visibility

Traditional KPIs

Organic traffic volume, keyword ranking positions, crawl error counts, index coverage, and bounce rate have been the standard dashboard for years. These remain important but are insufficient on their own. A site can rank #1 for a keyword while losing substantial zero-click share to AI Overviews.

Emerging AI-Era Metrics

- AI citation frequency: How often does your content appear as a cited source in ChatGPT, Perplexity, or Google AI Overviews?

- Featured snippet ownership rate: Percentage of target queries where your site captures the snippet position.

- Crawl-to-index ratio: Of pages Googlebot crawls, what percentage are successfully indexed? A gap signals technical barriers.

- INP (Interaction to Next Paint): The successor to FID, now a Core Web Vital. Measures real-world interactivity.

- Structured data coverage rate: Percentage of eligible pages with validated schema markup.
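Several of the metrics above are simple ratios. The sketch below shows the arithmetic with hypothetical counts; in practice the inputs would come from server logs, Search Console exports, and a crawl tool.

```python
def ratio_pct(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1) if whole else 0.0

# Hypothetical counts for illustration.
pages_crawled, pages_indexed = 1200, 900
eligible_pages, pages_with_valid_schema = 400, 260
target_queries, snippets_owned = 80, 12

crawl_to_index = ratio_pct(pages_indexed, pages_crawled)
schema_coverage = ratio_pct(pages_with_valid_schema, eligible_pages)
snippet_ownership = ratio_pct(snippets_owned, target_queries)

print(f"Crawl-to-index ratio: {crawl_to_index}%")          # 75.0%
print(f"Structured data coverage: {schema_coverage}%")     # 65.0%
print(f"Featured snippet ownership: {snippet_ownership}%") # 15.0%
```

In this example a quarter of crawled pages are not indexed, which is the kind of gap the crawl-to-index ratio is meant to surface.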

Visibility Beyond Rankings

Share-of-voice (SOV) measures your brand’s visibility across all relevant search queries — not just the ones you track. In an AI-first search landscape, SOV must now include AI engine presence, knowledge panel ownership, and rich result occupancy. A site can have flat organic rankings while its SOV expands through schema-powered rich results and AI citations.

How to Conduct a Technical SEO Site Audit using CRAVE Framework

A technical SEO site audit is not a single action — it is a sequenced investigation. The goal is to identify every structural barrier preventing search engines from crawling, rendering, indexing, and ranking your content. Here is how to approach it systematically, using the CRAVE Framework™ as your diagnostic backbone.

Step 1: Audit Crawl Integrity

Start with how Googlebot navigates your site. Use a crawl tool — Screaming Frog, Sitebulb, or Ahrefs — to map your entire URL structure and identify crawl depth, orphan pages, and redirect chains. Then pull your server log files and analyse how Googlebot actually behaves versus how you expect it to. Look specifically for crawl budget waste: parameter-generated URLs from faceted navigation, session IDs, or infinite scroll pagination consuming the majority of crawl allocation before reaching your high-priority pages. Every important page should be reachable within three clicks from the homepage.
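The log file step can be approximated with a short script. This is a minimal sketch that assumes the common "combined" access log format and identifies Googlebot by user-agent string alone, which can be spoofed, so a real audit would also verify the requesting IP addresses. The sample lines are invented for illustration.

```python
import re
from urllib.parse import urlsplit

# Matches the request, status, size, referrer and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_crawl_waste(lines: list[str]) -> tuple[int, int]:
    """Return (total Googlebot hits, hits on URLs carrying query parameters)."""
    total = parameterised = 0
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        total += 1
        if urlsplit(match["path"]).query:
            parameterised += 1
    return total, parameterised

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Hypothetical log lines.
sample_log = [
    f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /shoes?color=red&sort=price HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /shoes?sessionid=abc123 HTTP/1.1" 200 5100 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:09 +0000] "GET /shoes/running HTTP/1.1" 200 7300 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [10/Jan/2026:10:00:11 +0000] "GET /shoes?color=blue HTTP/1.1" 200 5000 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits, wasted = googlebot_crawl_waste(sample_log)
print(f"{wasted} of {hits} Googlebot requests hit parameterised URLs")
```

A high share of parameterised hits suggests that faceted navigation or session IDs are absorbing crawl activity that could go to priority pages.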

Step 2: Verify Render Accuracy

Crawlability and renderability are not the same thing. A page can be crawled but return a hollow DOM if it relies heavily on client-side JavaScript. Use Google Search Console’s URL Inspection tool to fetch and render individual pages as Googlebot sees them. Cross-reference with Chrome DevTools to identify content, schema markup, or internal links that only load after user interaction. If critical elements are missing from the rendered output, server-side rendering or dynamic rendering configuration is required.
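One way to quantify the gap between crawled and rendered output is to diff the links found in the raw HTML response against those in the rendered DOM. The sketch below assumes you already have both versions as strings (for example, the server response and the rendered HTML copied from the URL Inspection tool); it does not render JavaScript itself, and the sample markup is hypothetical.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collects href values from anchor tags."""
    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

def links_in(html: str) -> set[str]:
    collector = LinkCollector()
    collector.feed(html)
    return collector.links

def js_only_links(raw_html: str, rendered_html: str) -> list[str]:
    """Links present after rendering but absent from the initial HTML."""
    return sorted(links_in(rendered_html) - links_in(raw_html))

# Hypothetical raw response and rendered DOM for the same URL.
raw = '<nav><a href="/">Home</a></nav><div id="app"></div>'
rendered = ('<nav><a href="/">Home</a></nav><div id="app">'
            '<a href="/products/blue-widget">Blue widget</a>'
            '<a href="/products/red-widget">Red widget</a></div>')

print(js_only_links(raw, rendered))
```

Links that appear only in the rendered version depend on JavaScript execution, so they are the ones to check first when pages are crawled but poorly indexed.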

Step 3: Evaluate Authority Signals

This layer covers everything that communicates trust and structure to search engines. Confirm your site is fully HTTPS with no mixed content issues. Validate all structured data using Google’s Rich Results Test and the Schema Markup Validator, paying particular attention to the Product, Article, FAQPage, and BreadcrumbList schema types. Check canonical tag implementation across paginated series, filtered URLs, and syndicated content to ensure link equity is consolidating correctly. Audit your XML sitemap to confirm it contains only canonical, indexable URLs that return a 200 status.
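The sitemap check lends itself to automation. In this sketch the sitemap is parsed with the standard library and compared against crawl results; the `crawl` dictionary (URL mapped to status code and canonical target) is a hypothetical structure standing in for whatever your crawl tool exports.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text: str) -> list[str]:
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)]

def sitemap_problems(xml_text: str, crawl: dict[str, tuple[int, str]]) -> dict[str, str]:
    """Flag sitemap entries that are not 200-status, self-canonical URLs."""
    problems = {}
    for url in sitemap_urls(xml_text):
        status, canonical = crawl.get(url, (None, None))
        if status != 200:
            problems[url] = f"status {status}"
        elif canonical != url:
            problems[url] = f"canonical points to {canonical}"
    return problems

# Hypothetical sitemap and crawl export.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/old-page</loc></url>
  <url><loc>https://example.com/shoes?sort=price</loc></url>
</urlset>"""

crawl = {
    "https://example.com/": (200, "https://example.com/"),
    "https://example.com/old-page": (301, "https://example.com/old-page"),
    "https://example.com/shoes?sort=price": (200, "https://example.com/shoes"),
}

for url, reason in sitemap_problems(SITEMAP, crawl).items():
    print(url, "->", reason)
```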

Step 4: Measure Vitals Performance

Run your key page templates — homepage, category pages, product or service pages, blog posts — through Google PageSpeed Insights using field data from the Chrome User Experience Report, not just lab scores. Identify your worst-performing pages against the three Core Web Vitals thresholds: LCP under 2.5 seconds, INP under 200 milliseconds, and CLS below 0.1. Common fixes include migrating images to WebP or AVIF format, eliminating render-blocking scripts, implementing proper lazy loading, and reducing third-party tag payloads.
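Core Web Vitals are assessed at the 75th percentile of real-user measurements, so a template passes only if that percentile is within the threshold. The sketch below applies the three "good" thresholds quoted above to hypothetical field samples; the nearest-rank percentile method is an assumption made for simplicity, and CrUX reports its own precomputed percentiles.

```python
import math

# "Good" thresholds from the text: LCP in seconds, INP in milliseconds, CLS unitless.
GOOD = {"LCP": 2.5, "INP": 200, "CLS": 0.1}

def p75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]

def failing_metrics(field_data: dict[str, list[float]]) -> dict[str, float]:
    """Return metrics whose 75th percentile exceeds the 'good' threshold."""
    return {m: p75(v) for m, v in field_data.items() if p75(v) > GOOD[m]}

# Hypothetical field samples for one page template.
product_template = {
    "LCP": [1.8, 2.1, 2.4, 3.9],
    "INP": [120, 180, 240, 310],
    "CLS": [0.02, 0.05, 0.08, 0.3],
}

print(failing_metrics(product_template))
```

Here only INP fails: its 75th percentile is 240 ms, while LCP and CLS pass despite each having one slow outlier.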

Step 5: Assess Entity Alignment

The final layer examines whether your site’s architecture reinforces the topical entities you want to rank for. Review your URL structure for logical hierarchy and keyword consistency. Audit internal anchor text to confirm it reflects the terms and concepts each destination page targets. Check hreflang configuration for multilingual sites. Ensure breadcrumb schema matches your navigational structure. This layer is what bridges traditional ranking signals with AI citation eligibility — sites with clear entity architecture are systematically more likely to appear in Google AI Overviews and other generative search outputs.
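For the hreflang check, a common failure is a missing return link: page A declares page B as an alternate, but B does not point back to A, which can lead search engines to ignore the annotation. A minimal sketch, assuming the annotations have already been extracted into a hypothetical `hreflang` mapping of page URL to its declared alternates:

```python
def missing_return_links(hreflang: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """Return (page, alternate) pairs where the alternate does not link back."""
    missing = []
    for page, alternates in hreflang.items():
        for target in alternates.values():
            if target == page:
                continue   # self-reference, nothing to reciprocate
            if page not in hreflang.get(target, {}).values():
                missing.append((page, target))
    return sorted(missing)

# Hypothetical annotations: the German page forgets to reference the English one.
hreflang = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
    },
    "https://example.com/de/": {
        "de": "https://example.com/de/",
    },
}

print(missing_return_links(hreflang))
```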

Prioritising Your Findings

Not all audit findings carry equal weight. Once you have completed all five layers, prioritise fixes in CRAVE sequence order — crawl issues first, render issues second, and so on. A perfectly optimised schema implementation has no value on a page Googlebot cannot crawl. Resolving foundational issues before surface-level optimisations is what allows each fix to compound on the last, producing results that isolated changes rarely achieve.
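The sequencing rule can be expressed as a sort key. In this sketch the layer labels and the `pages_affected` tie-breaker are illustrative assumptions, not part of the framework as described; the findings are hypothetical.

```python
# Layers in CRAVE sequence order: foundational issues first.
CRAVE_ORDER = ["crawl", "render", "authority", "vitals", "entity"]

def prioritise(findings: list[dict]) -> list[dict]:
    """Sort by CRAVE layer, then by number of pages affected (largest first)."""
    return sorted(
        findings,
        key=lambda f: (CRAVE_ORDER.index(f["layer"]), -f["pages_affected"]),
    )

# Hypothetical audit findings.
findings = [
    {"issue": "Missing Article schema", "layer": "authority", "pages_affected": 120},
    {"issue": "Faceted URLs wasting crawl budget", "layer": "crawl", "pages_affected": 4000},
    {"issue": "Slow LCP on product template", "layer": "vitals", "pages_affected": 900},
    {"issue": "Orphaned category pages", "layer": "crawl", "pages_affected": 35},
    {"issue": "Links injected only after click", "layer": "render", "pages_affected": 300},
]

for rank, finding in enumerate(prioritise(findings), start=1):
    print(rank, finding["layer"], "-", finding["issue"])
```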

Tools You Will Need

A complete technical SEO audit draws on several tools working in combination: Screaming Frog or Sitebulb for crawl analysis, Google Search Console for index coverage and URL inspection, server log analysis via a tool like Splunk or a dedicated log analyser, Google PageSpeed Insights and Lighthouse for Core Web Vitals, and Google’s Rich Results Test for schema validation.

Your Next Step: From Knowledge to Competitive Advantage

Start with a single audit action: open Google Search Console and navigate to the ‘Pages’ report under Indexing. If important URLs sit in the ‘Discovered - currently not indexed’ or ‘Crawled - currently not indexed’ bucket, your site likely has an active technical or quality issue suppressing its organic potential right now. From there, the CRAVE Framework gives you a sequence for resolving issues systematically, without burning time on optimizations that won’t compound. Whether you execute in-house or bring in a specialist, the methodology remains the same. The most expensive technical SEO decision is the one you delay.

Frequently Asked Questions

What is a technical SEO audit?

A technical SEO audit is a comprehensive evaluation of a website’s infrastructure to identify issues that prevent search engines from effectively crawling, rendering, and indexing its content. It covers crawlability, indexation, site speed, Core Web Vitals, structured data, mobile-first compliance, HTTPS security, and internal link architecture. The output is a prioritized list of fixes with measurable impact on organic visibility.

How often should I conduct a technical SEO site audit?

For most sites, a full technical SEO audit should be conducted every 3–6 months, with continuous automated monitoring for crawl errors, index coverage changes, and Core Web Vitals regressions in between. After any major site change, such as a platform migration, redesign, or URL restructure, an immediate audit is essential. Large e-commerce or enterprise sites benefit from near-real-time monitoring tools such as ContentKing, alongside scheduled crawls in Screaming Frog or Sitebulb.

What does a technical SEO checklist include?

A comprehensive technical SEO checklist covers: XML sitemap accuracy, robots.txt configuration, canonical tag implementation, redirect chains and loops, Core Web Vitals (LCP, INP, CLS), mobile-first compliance, HTTPS and mixed content issues, structured data validation, JavaScript rendering verification, crawl depth analysis, internal link architecture, hreflang tags for multilingual sites, and log file analysis for crawl budget efficiency.

How is technical SEO different from on-page SEO?

Technical SEO addresses a site’s infrastructure — the systems that govern how search engines access and process your content. On-page SEO addresses the content itself: keyword placement, heading structure, meta tags, and content relevance. Technical SEO must be resolved first because on-page optimizations have no impact if search engines cannot crawl or index the pages those optimizations live on.

Can technical SEO issues cause a Google ranking drop?

Yes, and they frequently do. Common technical issues that directly cause ranking drops include: accidental noindex tags deployed to production, client-side JavaScript that fails to render for Googlebot and so hides content from it, Core Web Vitals regressions after site updates, duplicate content created by URL parameter issues, and crawl budget waste on low-value URLs that keeps high-priority pages from being crawled and indexed. Log file analysis and Search Console monitoring are the fastest ways to diagnose these drops.

How does technical SEO affect AI engine visibility?

AI engines like Google AI Overviews, Perplexity, and ChatGPT prefer to cite content that is well-structured, machine-readable, and authoritatively attributed. Technical factors that increase AI citation eligibility include: comprehensive schema markup (especially FAQ, HowTo, and Article schema), fast page load speeds, HTTPS security, clear entity signals through structured internal linking, and content formatted in extractable, self-contained blocks. Sites that perform well technically are systematically more visible in generative search outputs.
